I recently started working on a project migrating all the SQL Server regular service accounts to Group Managed Service Accounts (gMSA) for both SQL Server and SQL Server Agent accounts.

A Service Account is something that applications like the SQL Server, IIS, or scheduled tasks need to run under using Microsoft Active Directory. These are the regular domain user accounts, where the passwords needs to be manually managed and rotated. As these needs to be manually updated, downtime to the services are required if the password needs to be changed. Not only that but syncing these passwords across multiple servers can be an issue. This problem is resolved by using the Standalone Managed Service Account as Windows can manage the password automatically.

What is a Standalone Managed Service Account (sMSA) and a Group Managed Service Account?

Both the sMSA (Introduced in Windows Server 2008 R2) and gMSA (Introduced in Windows Server 2012) are managed domain account that provides automatic password management, simplified service principal name (SPN) management, and the ability to delegate the management to other administrators. The main difference is that an sMSA account can run the service on a single Server, whereas a gMSA account can manage a single account across multiple Servers.

Group Managed Service Account (gMSA)

A gMSA works just like the sMSA but can be used by multiple Servers. The password is automatically managed and updated every 30 days by default. These are best for password management and best for the server farms, web farms, distributed applications or load-balancing applications. Passwords are managed by Microsoft Key Distribution Service (KDS) where the domain controller rotates the account password and is securely used by the authorized servers. The password is very secure as no one can see it and not visible to anyone. Easy for the auditing as well as these passwords will be automatically updated without any manual work.

Minimum requirement to use gMSA

- Can only be used on Windows Server 2012 or later

- Active Directory Domain Services

- Creation of a KDS root key for password generation

Preferred Naming convention for the gMSA accounts for SQL Server

Note: gMSA Service Account can’t exceed 15 characters including the $ symbol which needs to be included at the end of every gMSA account.

Server service account: Domain\ + gms + {func} + {env} + sql

Server Agent service account: Domain\ + gms +{func} + {env} + sqlagt

where

{func} can be the primary function of the Server. This can be a 3 character limit.

{env} can be the 3 character enviroment like DEV, TST, QA, PRD

Note: If you wanted to implement the gMSA Service accounts for new SQL Servers, these Windows Servers first need to be built before requesting the gMSA Service accounts to your Server Engineers. They will be creating these gMSA Service Accounts for you.

What SQL Servers can use gMSA?

SQL Server standalone or SQL Servers on multiple nodes in a cluster or Always on Availability Groups can use gMSA Service Accounts.

Prerequisite Steps Before the Service Account Migration to gMSA Service Account on a Server

- Document the SPN details of the old Service account so we can migrate the same SPN’s to the new gMSA Service accounts. This is an important step because you need to have these details to migrate over these to the new gMSA and in case if you need to revert back, you need to have this list readily available. Remind your Server Engineer to place a copy of the SPN’s.

- Copy over the delegation and file share (FS) groups from the old SQL server engine account to the new gMSA Service accounts.

- Copy over the SQL permissions from the old Service Account to the new gMSA service account.

- Grant Windows permissions on the Data Domain shares, data, log and backup directories, registry keys (if applicable), Endpoint access if Availability groups are used, local group memberships, application specific permissions (if applicable) to the gMSA service accounts.

- Replicate the permissions from the existing service account to the corresponding gMSA service accounts if there are any scheduled tasks that needs be run under.

- Log on as a Service permissions, as this permission is required to run Windows Services. Without this permission, the SQL Server Service will fail to start.

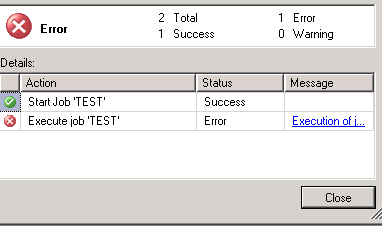

- Log on as a batch job for the SQL Server agent gMSA account for the SQL Agent jobs, scheduled tasks or any automated scripts.

Migration Steps for a Standalone SQL Server

Change Service Accounts on SQL and Restart the Services.

- RDP into the SQL Server and search for “SQL Server configuration manager”. Do not change the Service account in Services.msc

- Under SQL Server Services, right click the SQL Server (MSSQLSERVER) and Stop the services.

- Open SQL Server Configuration Manager.

- Right-click SQL Server (MSSQLSERVER) and select Properties.

- Click the Log On tab.

- Change Account Name to: domain\gms{func}{env}sql$ including the $ at the end of the account.

- Leave the password section blank. You do not have to enter anything into it.

- Click Apply. The automated password field will populate automatically.

- Then click OK.

- Repeat process for SQL Server Agent (MSSQLSERVER)

- Right click on the SQL Server and SQL Server Agent service, and select Start.

Copying SPN’s to the New gMSA Service Account

Request the Server Engineering team to copy the SPN’s from regular Service account to gMSA SQL Server service account.

Test the SQL Server Connectivity

- Open SQL Server management studio

- Test the Linked Servers using the dbatools cmd command:

Test-DbaLinkedServerConnection -SqlInstance instancename

Limitations:

SQL Server Reporting Services (SSRS) does not support running under a Group Managed Service Account (gMSA). Domain Service account is commonly used for SSRS.

If you are planning to use the gMSA for any other third party applications, you need to make sure those applications support gMSA. The best way to find that out is to contact the application support. For example, we migrated the Solarwinds DPA to gMSA without issues, but backup tools like PPDM don’t support gMSA Service accounts.

In the Part 2 of this series, I will write about how to migrate the regular Service account to gMSA Service account for Fail Over Cluster Instances (FCI) and for the SQL Server accounts using High Availability Groups.

Thanks for reading!

Resources:

Manage group Managed Service Accounts