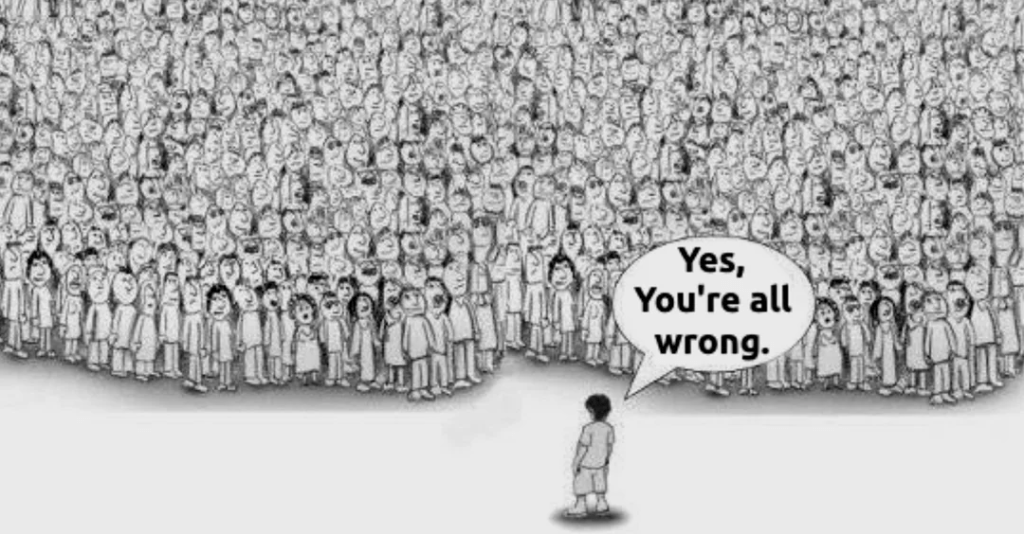

Have you noticed how quickly we label things as “good” or “bad” when someone asks for our opinion? Almost like those are the only two options available.

We’ve been conditioned to think in binaries: right or wrong, black or white. Somewhere along the way, we forgot there’s another option: staying neutral.

Think about a common situation. A friend comes to you upset, venting about something that happened with another person, and asks what you think. Without realizing it, you often form an opinion based only on what they’ve told you. If they’re upset, you tend to side with them and feel that same frustration. If they’re happy, you mirror that too. Even though you weren’t there and don’t know the full story, you instinctively support your friend, because that’s what friends do, right?

I’ve been in that position many times. Honestly, I don’t enjoy being put on the spot like that. But more often than not, I’ve found myself nodding along, agreeing, and forming opinions without having the full picture. Sometimes, that even led me to develop negative feelings toward someone I barely knew, based on just one side of the story. And it all happened without much conscious thought.

The real issue here is subtle but important: we can unknowingly absorb and adopt other people’s perspectives as our own. Even the person venting may not realize they’re spreading that influence. It’s all unconscious, but it shapes how we see others. And this doesn’t just happen once. Situations like this come up all the time in everyday life. Whenever someone asks you to choose between limited options (yes or no, right or wrong), remember that you’re not obligated to pick one. There’s always another choice: you can say you don’t have an opinion. You can stay neutral.

That’s something I learned the hard way. Small moments like these can have a bigger impact than we realize.

So the next time you’re asked for your opinion, pause for a second. You don’t always have to take a side. Choosing to stay neutral is a valid option, and often the most peaceful one.

That’s all from me today. Thanks for reading!